Safer AI by Design: Strengthening Child Protection in the Age of Chatbots

Written: September 2025 · Published: January 2026

This article is adapted from earlier academic work and has been edited for a general audience.

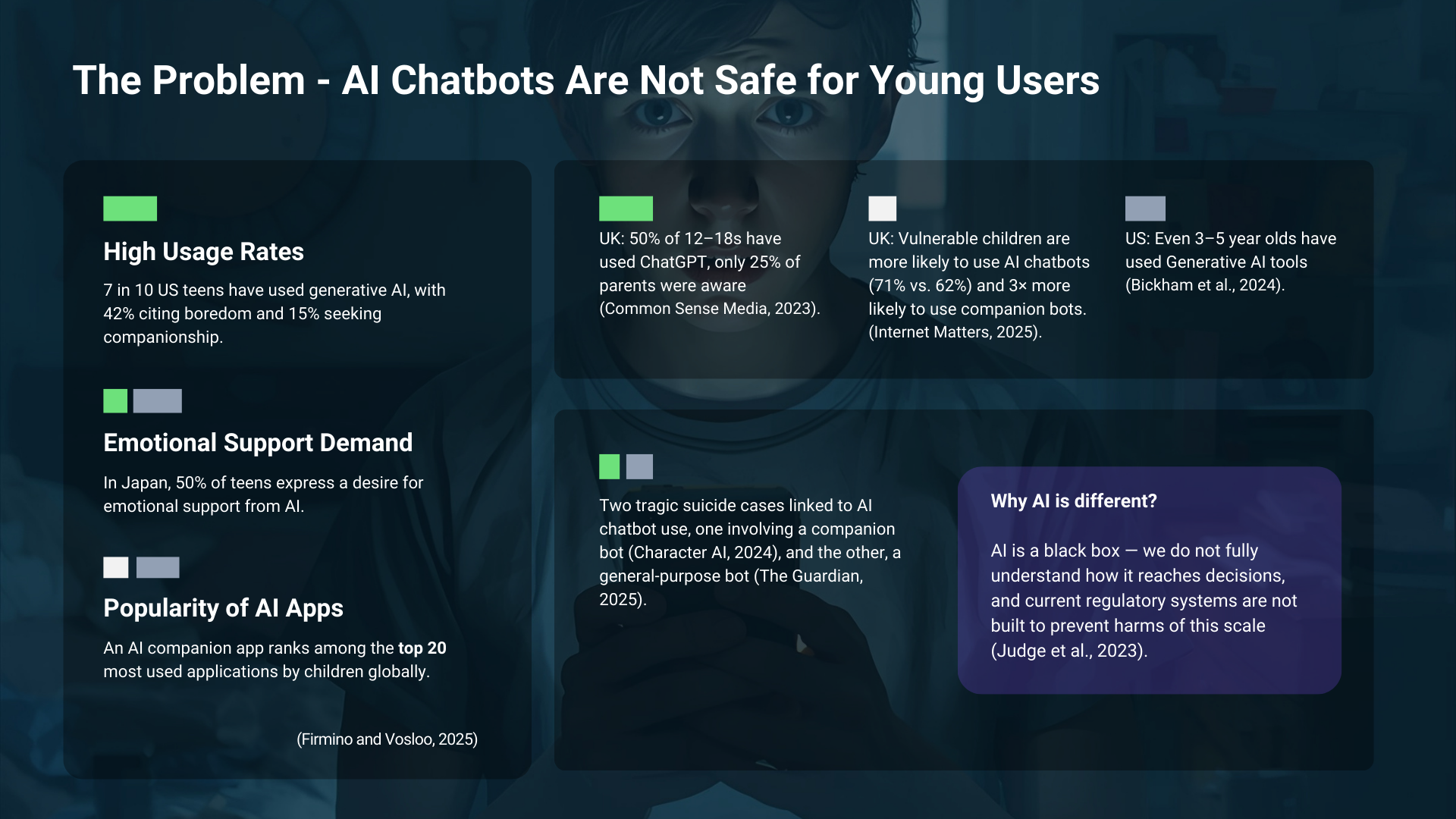

Children are already using conversational AI systems at scale, including systems designed for general audiences rather than young users. The result is a growing mismatch between how these tools are used in daily life and what current policy frameworks are designed to address. The mismatch is a social problem as much as a technical one, because conversational AI reshapes how children seek support, build trust, learn norms, and form emotional attachments, often in private and without adult awareness. When systems that simulate conversation and companionship become part of childhood, the impacts reach beyond individual users into education, family life, mental health, and long-term social development.

This article examines how AI systems that interact with children are regulated. Current EU rules such as the Digital Services Act (DSA) offer some safeguards, but they do not fully address the emotional and psychological risks posed by interactive AI systems. The article outlines where the current approach falls short and proposes directions for strengthening protection for vulnerable users, starting with children.

The problem area: children are using chatbots in deeply human ways

Scale and context

Children are not only experimenting with AI. Many are turning to it as a companion, a confidant, or a source of personal advice. Evidence across countries suggests widespread adoption among teenagers, limited parental visibility, and usage beginning at surprisingly young ages. The pattern that matters most is not simply that children are using AI, but how they are using it, often in emotionally complex and unsupervised contexts.

Why this is not "just another online risk"

Two dynamics make conversational AI different from many prior online safety issues. First, there is an emotional and relational layer: children can approach chatbots as if they were a person, and the system can mimic empathy without possessing it, creating an "empathy gap" where the interaction feels supportive but can become unsafe or misleading. Second, there is unpredictability. Many AI systems can produce confident outputs without stable guarantees about what they will say next, and children can take outputs literally, treat them as authoritative, or become emotionally dependent on them.

These risks are not only theoretical. In 2024 and 2025, families filed lawsuits after teenage children died by suicide following prolonged interactions with mainstream chatbots. The cases include one involving the companion-bot service Character.AI, and scrutiny later extended to a general-purpose chatbot.

The deeper policy challenge: a black box at scale

A key difficulty is that these systems can behave as "black boxes" at scale. We do not always understand why a model produced a given response, and harms can emerge through interaction dynamics rather than a single, easily testable failure. Most regulatory systems were not built to handle harms of this kind. The result is an urgent need for stronger safeguards, greater transparency, and systems designed to be safe before they reach children in real-world use.

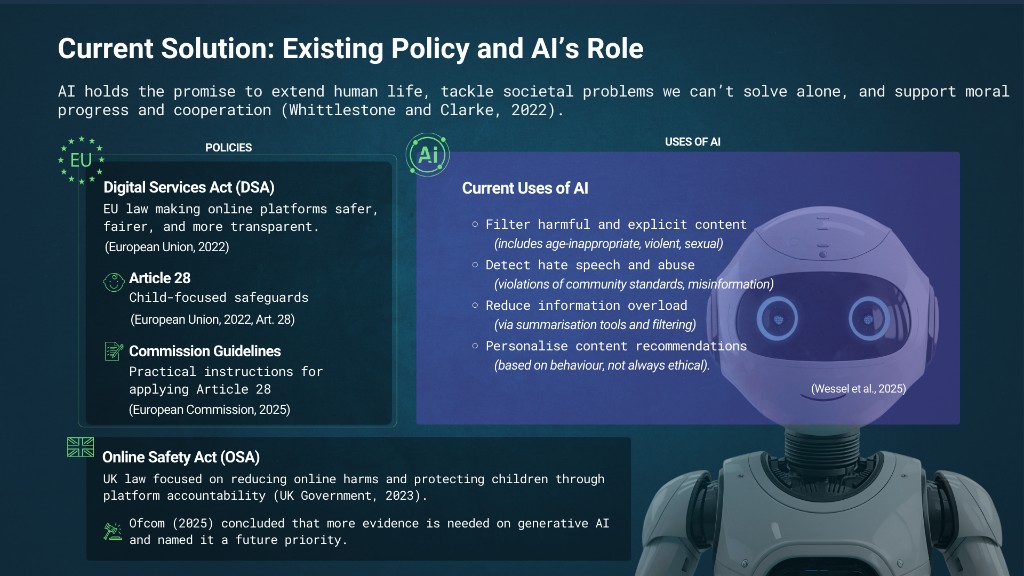

The current policy framework: what exists, and what it assumes

The Digital Services Act and Article 28

The Digital Services Act (DSA) is the EU's central online safety law, adopted in 2022 and fully applicable since February 2024. It aims to reduce illegal and harmful content, protect fundamental rights, increase platform accountability, and improve transparency.

Within the DSA, Article 28 places special obligations on online platforms accessible to minors, requiring measures that ensure a high level of privacy, safety, and security for children. The European Commission has also published guidelines describing how Article 28 should be applied in practice.

The role of AI under today's approach

In theory, platforms already use AI to support online safety efforts. AI tools can be used to filter harmful and age-inappropriate content, detect abuse or grooming risks, and flag potentially harmful material. This creates an attractive narrative: if AI contributes to the problem, AI can also help enforce compliance.

The question is whether current rules and enforcement models are equipped to address the emotional and psychological dimensions of conversational AI used by children.

The UK parallel: the Online Safety Act

The UK's Online Safety Act aims to reduce online harms and protect children via platform accountability. Ofcom has concluded that more evidence is needed to understand generative AI risks and how to manage them effectively, naming generative AI as a priority area for future consideration.

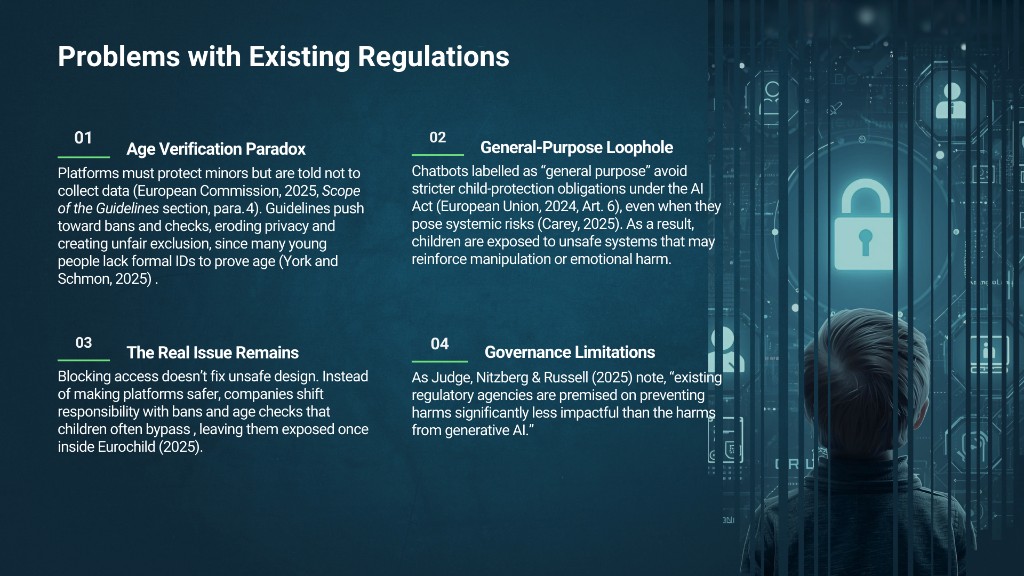

Where current policy falls short

Despite child-focused duties under the DSA, several gaps remain.

The age verification paradox

Platforms are expected to protect minors while also being discouraged from collecting additional identifying data. In practice, many services rely on self-declaration. Moves towards stricter age assurance can create exclusion risks for users without formal identification and raise privacy trade-offs.

Harmful design remains intact

Even if age gates improve, they do not fix harmful design features that can exist inside the product experience, such as dependency-forming interaction patterns or recommendation logic that prioritises engagement.

The general-purpose loophole

General-purpose chatbots can avoid stricter child-protection expectations, even when children use them as companions. The result is that child exposure remains high while regulatory responsibilities stay comparatively light.

Enforcement gaps and jurisdiction limits

Cross-border access complicates enforcement. Providers outside the EU can be difficult to regulate in practice, weakening the protective promise of EU safeguards when real-world access remains wide.

Access with minimal barriers

Even relatively simple AI systems have produced unsafe outputs. In fully conversational systems, risks expand because interaction is long-form, adaptive, and relational. Over such interactions, children can be drawn into emotional reliance without any single clear "content violation" event to flag.

Overall, these gaps suggest the current framework was not built for how children are actually using AI chatbots, and child safety cannot be achieved through access controls alone.

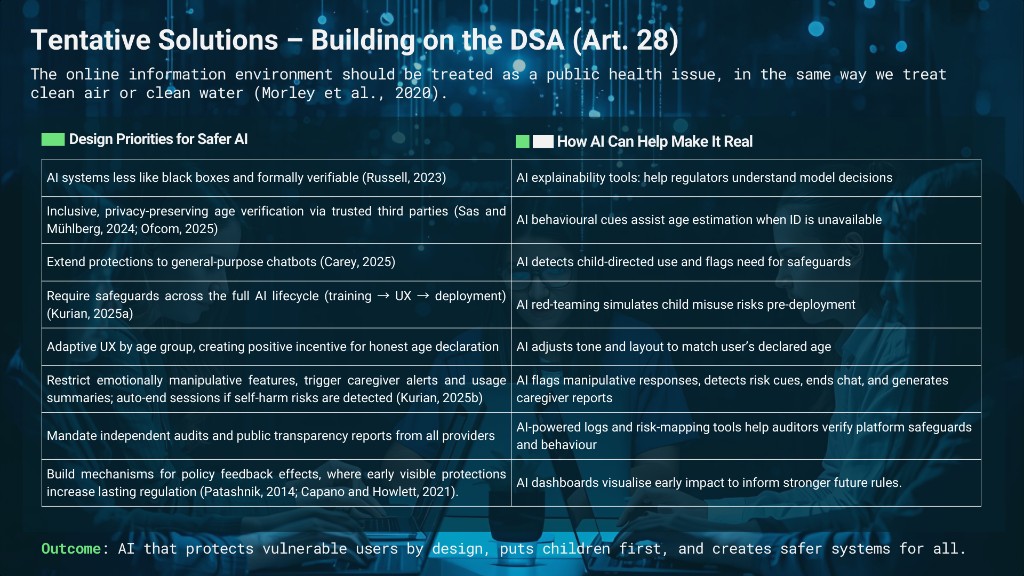

A complementary proposal: child safety across the AI lifecycle

The core shift is a change in mindset: instead of relying mainly on bans and age verification, conversational AI should be made safer by design, especially where children are likely to access it.

Safeguards across the lifecycle

Safeguards should be embedded across the AI lifecycle, from datasets and training decisions through to interface design and deployment. Safety should be built in, not added afterwards.

Adaptive user experiences rather than bans

Instead of treating "under 18" as one category, systems should provide age-appropriate experiences. A seven-year-old and a sixteen-year-old are both minors, but their needs and vulnerabilities differ.
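For readers who build these systems, the idea of graded experiences can be sketched in a few lines. The example below is a minimal illustration, not a recommended policy: the age bands, field names, and limits are all hypothetical and are not drawn from the DSA, the Commission guidelines, or any existing product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ExperienceProfile:
    """Settings a service might vary by age band (all fields are hypothetical)."""
    name: str
    max_session_minutes: Optional[int]  # None means no session limit
    plain_language_safety_prompts: bool
    caregiver_controls: bool


# Hypothetical bands chosen only for illustration: (lowest age, highest age, profile).
MINOR_PROFILES = [
    (0, 12, ExperienceProfile("child", 30, True, True)),
    (13, 15, ExperienceProfile("younger_teen", 60, True, True)),
    (16, 17, ExperienceProfile("older_teen", 120, False, False)),
]
ADULT_PROFILE = ExperienceProfile("adult", None, False, False)


def profile_for_age(age: int) -> ExperienceProfile:
    """Return the profile for an age instead of a single under-18 switch."""
    if age < 0:
        raise ValueError("age must be non-negative")
    for lowest, highest, profile in MINOR_PROFILES:
        if lowest <= age <= highest:
            return profile
    return ADULT_PROFILE
```

Under these assumed bands, a seven-year-old and a sixteen-year-old receive different profiles even though both are minors, which is the point of the design.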

No manipulative intimacy

Conversational systems should be restricted from features that simulate intimacy, encourage emotional dependency, or exploit anthropomorphism in ways that mislead vulnerable users.

Privacy-preserving age assurance and incentives for honesty

Age assurance should be privacy-preserving wherever possible, including the use of trusted third parties. Self-declaration should be designed with incentives for honesty, so declaring a true age leads to a safer, better experience rather than a worse one.
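One way to picture a trusted third party is as an issuer of a signed token that states only an age band, so the chatbot provider never sees a name, birth date, or identity document. The sketch below is a simplified illustration under stated assumptions: the function names and band labels are hypothetical, and it uses a shared-secret signature (HMAC) for brevity, whereas a real scheme would more likely use public-key signatures and add expiry and replay protection.

```python
import base64
import hashlib
import hmac
import json
from typing import Optional


def issue_age_token(age_band: str, key: bytes) -> str:
    """Run by the (hypothetical) third party: sign a payload holding only an age band."""
    payload = json.dumps({"age_band": age_band}, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).digest()
    return (
        base64.urlsafe_b64encode(payload).decode()
        + "."
        + base64.urlsafe_b64encode(signature).decode()
    )


def verify_age_token(token: str, key: bytes) -> Optional[str]:
    """Run by the service: return the age band if the signature checks out, else None."""
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:
        # Wrong number of parts or invalid base64.
        return None
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return None
    return json.loads(payload)["age_band"]
```

The service learns the band and nothing else. Whether that band then unlocks a safer, better experience rather than a worse one is a product decision the token itself cannot guarantee.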

Parental controls, reporting tools, and safety affordances

Practical features matter: parental controls that allow consent to be verified, adjusted, and revoked; reporting tools children can actually use; proactive detection of unsafe patterns; child-safe recommender design prioritising wellbeing over engagement; warning and filtering tools; and a "panic button" for immediate exit and reporting.
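Proactive detection differs from ordinary content filtering because it looks at the conversation as a whole. The sketch below is one hypothetical way to express that: it assumes some upstream classifier (not shown, and not specified by any regulation) scores each turn from 0 to 1, and it escalates either on a single severe turn or when moderate signals accumulate across recent turns. The class name, window size, and thresholds are illustrative only.

```python
from collections import deque


class SessionRiskMonitor:
    """Track recent per-turn risk scores and flag cumulative patterns."""

    def __init__(self, window: int = 20, turn_threshold: float = 0.9,
                 cumulative_threshold: float = 6.0) -> None:
        self.scores = deque(maxlen=window)  # keeps only the most recent turns
        self.turn_threshold = turn_threshold
        self.cumulative_threshold = cumulative_threshold

    def observe(self, score: float) -> str:
        """Record one turn's score and return "escalate" or "ok"."""
        if not 0.0 <= score <= 1.0:
            raise ValueError("score must be between 0 and 1")
        self.scores.append(score)
        if score >= self.turn_threshold:
            return "escalate"  # a single severe turn
        if sum(self.scores) >= self.cumulative_threshold:
            return "escalate"  # no single violation, but a sustained pattern
        return "ok"
```

What "escalate" triggers, such as a safety prompt, a human review, or a caregiver notification, is a governance choice rather than a technical one.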

Oversight and global reach

This approach also requires mandatory audits and third-party transparency reports; escalation and caregiver notification pathways where serious risks are detected; and duties extending to non-EU providers, with publication requirements for safeguards and access consequences for non-compliance.

The intended outcome is AI that protects vulnerable users by design and makes systems safer for everyone.

Limits and trade-offs

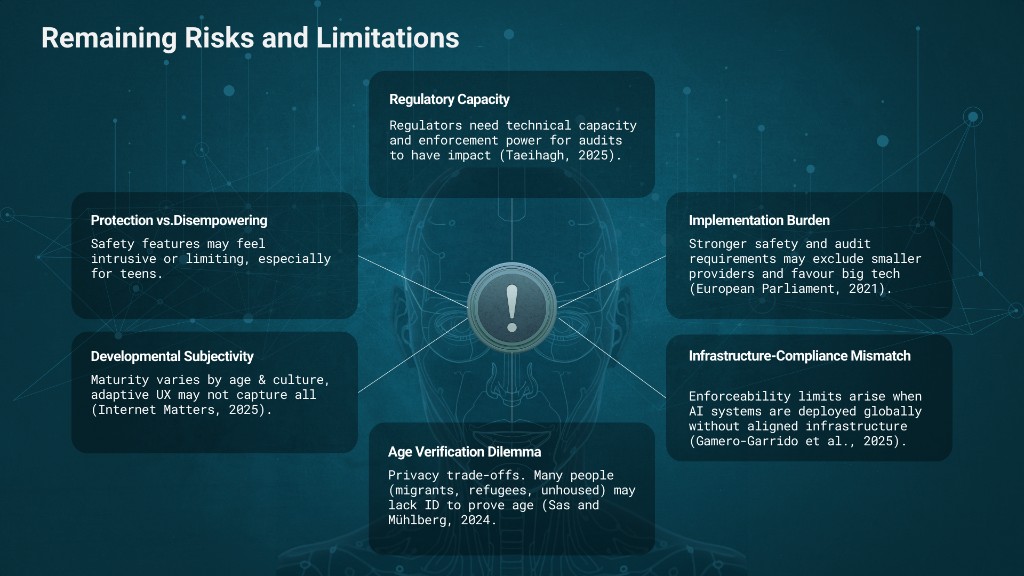

Under any proposal in this space, there are constraints and trade-offs:

- Audits only work if regulators have the technical capacity and enforcement power to evaluate systems and act on findings.

- Non-EU providers and VPN access make cross-border enforcement difficult in practice.

- Adaptive UX and safety features may feel intrusive or restrictive, especially for older teenagers.

- Lifecycle safeguards and audit requirements can impose burdens that smaller developers struggle to meet.

- Maturity varies by age, culture, and experience, so age-based adaptations may not capture all developmental differences.

- Privacy trade-offs around age verification persist, and many residents in Europe may lack formal ID to prove age.

These limitations do not undermine the need for safeguards. They underline why child protection policy must be iterative, inclusive, and tested against real-world conditions.

Closing: what would it mean to design AI for a child?

A useful framing question sits underneath the entire argument: what would it mean to design AI not with an adult in mind, but for a child? Conversational AI changes the nature of risk. Harms are not only about content but about interaction, dependency, persuasion, and uncertainty, unfolding over time and often out of sight. If the online information environment is treated as a public health issue, child safety cannot depend mainly on warnings and access restrictions. It must be embedded in how systems are built, audited, and governed from the start. Children's safety needs to be an architectural requirement, not a policy afterthought.

References

Bickham, D.S., Schwamm, S., Izenman, E.R., Yue, Z., Carter, M., Powell, N., Tiches, K. and Rich, M. (2024) Use of voice assistants & generative AI by children and families. Boston: Boston Children's Hospital Digital Wellness Lab.

Capano, G., Howlett, M. and Ramesh, M. (2015) 'Bringing governments back in: Governance and governing in comparative policy analysis', Journal of Comparative Policy Analysis, 17(4), pp. 311–321. https://doi.org/10.1080/13876988.2015.1031977 (Accessed: 13 September 2025).

Carey, S. (2025) 'Regulating Uncertainty: Governing General-Purpose AI Models and Systemic Risk', European Journal of Risk Regulation, pp. 1–17. https://doi.org/10.1017/err.2025.10040.

Common Sense Media (2023) Impact Research report. https://www.commonsensemedia.org/sites/default/files/featured-content/files/common-sense-ai-polling-memo-may-10-2023-final.pdf (Accessed: 20 August 2025).

Eurochild (2025) The rights of children in the digital environment. Eurochild. https://eurochild.org/resource/the-rights-of-children-in-the-digital-environment/ (Accessed: 21 August 2025).

European Commission (2025) Approval of the content on a draft Communication from the Commission – Guidelines on measures to ensure a high level of privacy, safety and security for minors online, pursuant to Article 28(4) of Regulation (EU) 2022/2065. C(2025) 4764 final. Brussels: European Commission. https://digital-strategy.ec.europa.eu/en/library/commission-publishes-guidelines-protection-minors (Accessed: 21 August 2025).

European Parliament (2021) EU Artificial Intelligence Act: Initial appraisal of a European Commission impact assessment. Brussels: European Parliamentary Research Service (EPRS). https://www.europarl.europa.eu/RegData/etudes/BRIE/2021/694212/EPRS_BRI(2021)694212_EN.pdf (Accessed: 12 September 2025).

European Union (2022) Regulation (EU) 2022/2065… Digital Services Act and amending Directive 2000/31/EC. Official Journal of the European Union, L 277, pp. 1–102. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A32022R2065 (Accessed: 21 August 2025).

European Union (2022) Regulation (EU) 2022/2065 — Article 28: Protection of minors online. Official Journal of the European Union, L 277, pp. 1–102. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A32022R2065#d1e4100-1-1 (Accessed: 21 August 2025).

European Union (2024) Regulation (EU) 2024/1689… Artificial Intelligence Act. Official Journal of the European Union, L 2024/1689. https://artificialintelligenceact.eu/article/6/ (Accessed: 21 August 2025).

Firmino, S. and Vosloo, S. (2025) The risky new world of tech's friendliest bots. UNICEF Innocenti (Ideas and articles), 9 July. https://www.unicef.org/innocenti/stories/risky-new-world-techs-friendliest-bots (Accessed: 22 August 2025).

Gamero-Garrido, A., Pearce, A., Kreitz, G., Sundaresan, S., Levin, D. and Ensafi, R. (2025) 'Empirically measuring data localization in the EU', arXiv preprint, arXiv:2504.09019. https://arxiv.org/abs/2504.09019 (Accessed: 12 September 2025).

Internet Matters (2025) Me, Myself and AI: Understanding children's and young people's use of AI chatbots. https://www.internetmatters.org/hub/research/me-myself-and-ai-chatbot-research/ (Accessed: 12 September 2025).

Judge, B., Nitzberg, M. and Russell, S. (2025) 'When code isn't law: rethinking regulation for artificial intelligence', Policy and Society, 44(1), pp. 85–97. https://doi.org/10.1093/polsoc/puae020.

Kurian, N. (2025a) 'Developmentally aligned AI: a framework for translating the science of child development into AI design', AI Brain and Child, 1(1), p. 9. https://doi.org/10.1007/s44436-025-00009-z.

Kurian, N. (2025b) 'AI's empathy gap: The risks of conversational Artificial Intelligence for young children's well-being and key ethical considerations for early childhood education and care', Contemporary Issues in Early Childhood, 26(1), pp. 132–139. https://doi.org/10.1177/14639491231206004.

Morley, J., Cowls, J., Taddeo, M. and Floridi, L. (2020) 'Public health in the information age: Recognizing the infosphere as a social determinant of health', Journal of Medical Internet Research, 22(8), e19311. https://doi.org/10.2196/19311 (Accessed: 21 August 2025).

Ofcom (2025) Guidance on highly effective age assurance (Part 3: services), updated version published 24 April. https://www.ofcom.org.uk/siteassets/resources/documents/consultations/category-1-10-weeks/statement-age-assurance-and-childrens-access/part-3-guidance-on-highly-effective-age-assurance.pdf (Accessed: 22 August 2025).

Ofcom (2025) Protecting children from harms online. Volume 2: The causes and impacts of online harms to children. 24 April. https://www.ofcom.org.uk/siteassets/resources/documents/consultations/category-1-10-weeks/statement-protecting-children-from-harms-online/main-document/volume-2-the-causes-and-impacts-of-online-harms-to-children.pdf (Accessed: 12 September 2025).

Patashnik, E.M. (2014) Reforms at Risk: What Happens After Major Policy Changes Are Enacted. Princeton: Princeton University Press.

Russell, S. (2023) Written testimony. Hearing before the Committee on the Judiciary, United States Senate, 26 July. https://www.judiciary.senate.gov/imo/media/doc/2023-07-26_-_testimony_-_russell.pdf (Accessed: 22 August 2025).

Taeihagh, A. (2025) 'Governance of generative AI', Policy and Society, 44(1), pp. 1–22. https://doi.org/10.1093/polsoc/puaf001 (Accessed: 12 September 2025).

UK Government (2023) Online Safety Act 2023. https://www.legislation.gov.uk/ukpga/2023/50/contents/enacted (Accessed: 11 September 2025).

Wessel, M., Wenzel, M., Witte, C., Yigitcanlar, T. and Recker, J. (2025) 'Generative AI and its transformative value for digital platforms', Journal of Management Information Systems, 42(2), pp. 346–369. https://doi.org/10.1080/07421222.2025.2487315.

Whittlestone, J. and Clarke, S. (2022) 'AI challenges for society and ethics', in Bullock, J.B., Zhang, B., Cugurullo, F. and Yigitcanlar, T. (eds) The Oxford handbook of AI governance. Oxford: Oxford University Press, pp. 45–64. https://doi.org/10.1093/oxfordhb/9780197579329.013.3 (Accessed: 21 August 2025).

York, C.S. and Schmon, C. (2025) Just banning minors from social media is not protecting them. Deeplinks Blog, Electronic Frontier Foundation, 28 July. https://www.eff.org/deeplinks/2025/07/just-banning-minors-social-media-not-protecting-them (Accessed: 21 August 2025).